Fine-tune Mixtral-8x7B Quantized with AQLM (2-bit) on Your GPU

A surprisingly good and efficient alternative to QLoRA for fine-tuning very large models

Mixtral-8x7B is one of the best open LLMs. It is also very challenging to fine-tune on consumer hardware. The model occupies 96.8 GB of memory when fully loaded, and fine-tuning requires even more memory to store the optimizer states and training batches. Even an H100 GPU with 80 GB of memory wouldn’t be enough.

In this situation, QLoRA with 4-bit quantization is an appealing solution. Quantizing to 4-bit divides the model size by 4, and fine-tuning only a LoRA adapter on top of the frozen model greatly reduces the size of the optimizer states.
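To make these orders of magnitude concrete, here is a rough back-of-the-envelope sketch. It assumes that Mixtral-8x7B has about 46.7 billion parameters, that AdamW keeps two 32-bit states per trainable parameter, and that the LoRA adapter has 100 million parameters (a hypothetical size picked only for illustration). It counts weights and optimizer states only, so it will not exactly match measured memory usage.

```python
def weights_gb(num_params: float, bits_per_param: float) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def adamw_states_gb(trainable_params: float, bytes_per_state: int = 4) -> float:
    """Memory for AdamW's two moment estimates per trainable parameter."""
    return trainable_params * 2 * bytes_per_state / 1e9


# Assumption: Mixtral-8x7B has roughly 46.7 billion parameters in total.
MIXTRAL_PARAMS = 46.7e9
# Hypothetical adapter size, chosen only for illustration.
LORA_PARAMS = 100e6

print(f"16-bit weights: ~{weights_gb(MIXTRAL_PARAMS, 16):.0f} GB")
print(f"4-bit weights:  ~{weights_gb(MIXTRAL_PARAMS, 4):.0f} GB")
print(f"AdamW states, full fine-tuning: ~{adamw_states_gb(MIXTRAL_PARAMS):.0f} GB")
print(f"AdamW states, LoRA adapter:     ~{adamw_states_gb(LORA_PARAMS):.1f} GB")
```

Under these assumptions, the optimizer states for full fine-tuning are several times larger than the 16-bit weights themselves, while those of the adapter are comparatively negligible.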

Yet, even with QLoRA, we still need 32 GB of GPU memory to fine-tune Mixtral-8x7B. I tried it in this article:

But what if we could fine-tune Mixtral-8x7B quantized to a lower precision?

For instance, we can quantize Mixtral-8x7B with AQLM to 2-bit with minimal degradation of the model’s performance. But are AQLM models easy to fine-tune?

In this article, I show how to fine-tune Mixtral-8x7B quantized with AQLM using only 16 GB of GPU RAM. In other words, a $500 GPU is enough to fine-tune Mixtral. I also discuss how to optimize the fine-tuning hyperparameters to further reduce memory consumption while maintaining good performance. To my surprise, fine-tuning a 2-bit Mixtral is fast and may yield an even better model than QLoRA while using half the memory.
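As a quick sanity check on why 2-bit quantization makes a 16 GB GPU plausible, the sketch below estimates how much memory remains for the adapter, activations, and optimizer states once the quantized weights are loaded. It assumes about 46.7 billion parameters and counts exactly 2 or 4 bits per parameter, ignoring codebooks, scales, and any layers left unquantized, so real models will be somewhat larger. The function name `headroom_gb` is mine, not part of any library.

```python
def headroom_gb(num_params: float, bits_per_param: float, gpu_gb: float):
    """Return (weights_gb, remaining_gb) for a given GPU memory budget."""
    weights = num_params * bits_per_param / 8 / 1e9
    return weights, gpu_gb - weights


# Assumption: Mixtral-8x7B has roughly 46.7 billion parameters in total.
MIXTRAL_PARAMS = 46.7e9

for bits, gpu_gb in [(4, 32), (4, 16), (2, 16)]:
    weights, remaining = headroom_gb(MIXTRAL_PARAMS, bits, gpu_gb)
    status = "fits" if remaining > 0 else "does not fit"
    print(f"{bits}-bit on a {gpu_gb} GB GPU: weights ~{weights:.1f} GB, "
          f"remaining ~{remaining:.1f} GB ({status})")
```

By this estimate, the 4-bit weights alone already exceed 16 GB, whereas the 2-bit weights leave a few gigabytes free for training.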

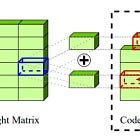

I focus on Mixtral in this article, but you can apply the same steps to any model quantized with AQLM. If you want to know how AQLM works and how to quantize a model with it, I wrote an article about it here:

My notebook showing how to fine-tune a 2-bit Mixtral-8x7B is available here: